Supabase pgvector Alternative: When to Move Vector Search to Managed PostgreSQL

Supabase is a great platform. For many teams, it is the fastest way to build an application with Postgres, auth, storage, realtime, edge functions, row-level security, and a clean developer experience.

So this is not a "Supabase is bad" article.

The question is narrower: when your pgvector workload becomes important, should it stay inside Supabase, or should vector-heavy PostgreSQL move to a focused managed provider?

The answer depends on what you are buying. If the value is the full application platform, Supabase can be the right place to stay. If the value is predictable vector search performance on PostgreSQL, a focused managed pgvector provider can be a better fit.

Keep Supabase when the platform is the value

Stay on Supabase if you rely on:

- Auth.

- Storage.

- Realtime.

- Edge functions.

- Dashboard workflows.

- Row-level security policies tightly coupled to Supabase services.

- A simple all-in-one developer platform.

- A single product for early application development.

If your vector search is small, low-volume, or non-critical, Supabase pgvector is often good enough. That is especially true when the embedding table is still small enough to fit comfortably in memory and the app is not sensitive to p95 or p99 search latency.

Supabase can also be the best choice when your team wants speed of development more than workload specialization. For many products, that is the correct tradeoff.

Consider an alternative when pgvector becomes the workload

The calculation changes when pgvector is no longer a side feature.

Watch for these signs:

- Vector queries are a large share of database load.

- HNSW indexes are larger than expected.

- p95 or p99 latency is unstable.

- Compute add-ons are becoming the main cost.

- You need more predictable monthly pricing.

- You want deeper help with HNSW tuning.

- You are running RAG or semantic search in the critical path.

- You need to benchmark storage behavior, not just CPU.

- Your app runs multiple vector searches per user request.

At that point, you are not really buying an app platform. You are buying vector search performance on PostgreSQL.
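To judge whether p95 or p99 latency is really unstable, it helps to compute the percentiles from raw query timings rather than from averages. The following is a minimal sketch using the nearest-rank method; the sample values are invented for illustration, and `tail_ratio` is a hypothetical helper, not a standard metric.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of latency samples (milliseconds)."""
    if not samples:
        raise ValueError("need at least one sample")
    ordered = sorted(samples)
    # 1-based nearest rank, clamped so pct=0 still returns the minimum.
    rank = max(math.ceil(pct * len(ordered) / 100), 1)
    return ordered[rank - 1]


def tail_ratio(samples):
    """p99 divided by p50; a large ratio hints at an unstable tail."""
    return percentile(samples, 99) / percentile(samples, 50)


# Invented samples: mostly cached reads plus a few slow cache misses.
latencies_ms = [4.0] * 95 + [40.0] * 5

print("p50:", percentile(latencies_ms, 50))
print("p95:", percentile(latencies_ms, 95))
print("p99:", percentile(latencies_ms, 99))
print("p99/p50:", tail_ratio(latencies_ms))
```

A median that looks healthy next to a p99 several times larger is the pattern worth investigating, because averages hide it.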

Why storage matters for Supabase pgvector alternatives

pgvector HNSW indexes are random-read heavy. If the hot index pages fit in memory, almost any decent Postgres setup feels fast. When the index grows beyond cache, the storage layer starts to matter.
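A back-of-the-envelope estimate can show whether an index is likely to outgrow cache. The sketch below is an approximation under stated assumptions, not pgvector's exact on-disk layout: it counts 4 bytes per dimension plus a layer-0 neighbor list of `2 * m` entries at roughly 6 bytes each, and ignores upper layers, tuple headers, and page overhead. The `headroom` parameter is a hypothetical knob for how much of the cache the index may use.

```python
GIB = 1024 ** 3


def estimate_hnsw_bytes(rows, dims, m=16):
    """Rough lower-bound estimate of HNSW index size in bytes.

    Assumption: each element stores a 4-byte float per dimension and a
    layer-0 neighbor list of 2*m entries at about 6 bytes each. Real
    indexes are larger because of upper layers and page overhead.
    """
    vector_bytes = 4 * dims
    neighbor_bytes = 2 * m * 6
    return rows * (vector_bytes + neighbor_bytes)


def fits_in_cache(index_bytes, cache_bytes, headroom=0.5):
    """True if the index fits in the share of cache reserved for it."""
    return index_bytes <= cache_bytes * headroom


# Hypothetical workload: 5 million chunks with 1536-dimension embeddings.
size = estimate_hnsw_bytes(rows=5_000_000, dims=1536)
print(f"estimated index size: {size / GIB:.1f} GiB")
print("fits in half of a 16 GiB cache:", fits_in_cache(size, 16 * GIB))
```

Treat the result as a floor. The real number comes from measuring the built index on the database itself.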

That is why a focused provider like Rivestack uses dedicated NVMe nodes for pgvector workloads. The goal is not to change your SQL. The goal is to give the same SQL better and more predictable storage behavior when the index is too large to remain fully cached.

This matters most for:

- Large document search.

- Multi-tenant RAG.

- Recommendation systems.

- Semantic search APIs.

- Retrieval flows that run several vector queries per request.

- Workloads where p99 latency affects user experience.

For the storage details, see pgvector on NVMe vs cloud SSDs.

Cost model: platform pricing vs workload pricing

Supabase pricing makes sense when you want the whole platform. You are paying for a developer experience and a bundle of capabilities, not only a Postgres node.

For vector-heavy workloads, ask what you are actually paying for:

- Base plan.

- Compute size.

- Storage.

- Backups.

- Network.

- Add-ons.

- Any usage-based components.

- Extra environments and branches.

The important question is not "which provider is cheapest today?" It is "what happens when vector queries double, the HNSW index grows, and the product starts using retrieval in more places?"

Rivestack prices by node. That makes the cost easier to model when your workload has many vector queries per user request. Fixed pricing is especially useful when search traffic is unpredictable or when retrieval is part of every AI response.
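The growth question can be modeled before talking to any vendor. The sketch below projects which memory tier an index would need month by month and what that tier costs. Every tier size and price in it is a made-up placeholder, not a quote from Supabase, Rivestack, or anyone else; substitute each provider's real price list before drawing conclusions.

```python
# Placeholder tiers: (memory in GiB, monthly price). Not real prices.
TIERS = [(4, 60), (8, 110), (16, 210), (32, 410), (64, 960)]


def smallest_tier(required_gib, tiers):
    """Return the first (memory, price) tier with enough memory."""
    for memory_gib, price in tiers:
        if memory_gib >= required_gib:
            return memory_gib, price
    raise ValueError("no tier is large enough")


def project(index_gib, growth_per_month, months, tiers, headroom=2.0):
    """Tier and price per month as the index grows by a fixed factor.

    headroom is an assumed multiplier: memory needed = index size * headroom,
    leaving room for ordinary PostgreSQL cache next to the index.
    """
    rows = []
    for month in range(months + 1):
        size = index_gib * growth_per_month ** month
        memory_gib, price = smallest_tier(size * headroom, tiers)
        rows.append((month, round(size, 1), memory_gib, price))
    return rows


for month, size, memory_gib, price in project(3.0, 1.25, 6, TIERS):
    print(f"month {month}: index {size} GiB -> {memory_gib} GiB tier, {price}/mo")
```

Running the same projection against two price lists shows where the curves cross, which is more useful than comparing today's bill.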

HNSW tuning differences to evaluate

Supabase exposes PostgreSQL, which means pgvector works through standard SQL. For many workloads, that is enough.

When vector search becomes central, you should also ask:

- Can I tune `hnsw.ef_search` per workload?

- Can I rebuild indexes with different `m` and `ef_construction` values?

- Can support help inspect `EXPLAIN ANALYZE` for filtered vector queries?

- Do I have enough memory for the HNSW index and ordinary PostgreSQL cache?

- What happens during extension upgrades?

- How do I test recall before and after tuning?

Tuning is not about blindly increasing every setting. Higher recall often costs more latency. Faster builds can produce lower-quality indexes. More memory can help, but only if the bottleneck is cache pressure. A focused managed pgvector provider should help you make those tradeoffs deliberately.
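Testing recall before and after tuning comes down to comparing the ids returned by the HNSW index against the ids from an exact search over the same queries. The sketch below assumes both lists have already been collected, for example by running each test query once with the index and once as an exact scan. The ids are invented, and the function names are illustrative rather than part of pgvector.

```python
def recall_at_k(approx_ids, exact_ids, k):
    """Fraction of the exact top-k neighbors found in the approximate top-k."""
    if k <= 0:
        raise ValueError("k must be positive")
    exact = set(exact_ids[:k])
    if not exact:
        return 0.0
    return len(exact & set(approx_ids[:k])) / len(exact)


def mean_recall(pairs, k):
    """Average recall@k over (approx_ids, exact_ids) pairs, one per query."""
    scores = [recall_at_k(approx, exact, k) for approx, exact in pairs]
    return sum(scores) / len(scores)


# Invented result ids for two test queries.
# First list in each pair: HNSW index results. Second: exact scan results.
pairs = [
    ([1, 2, 3, 9, 5], [1, 2, 3, 4, 5]),
    ([7, 8, 6, 10, 11], [6, 7, 8, 10, 12]),
]

print("mean recall@5:", mean_recall(pairs, k=5))
```

Recording this number alongside latency for each `hnsw.ef_search` value makes the recall-versus-latency tradeoff visible instead of anecdotal.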

For a practical tuning checklist, read pgvector HNSW tuning on managed PostgreSQL.

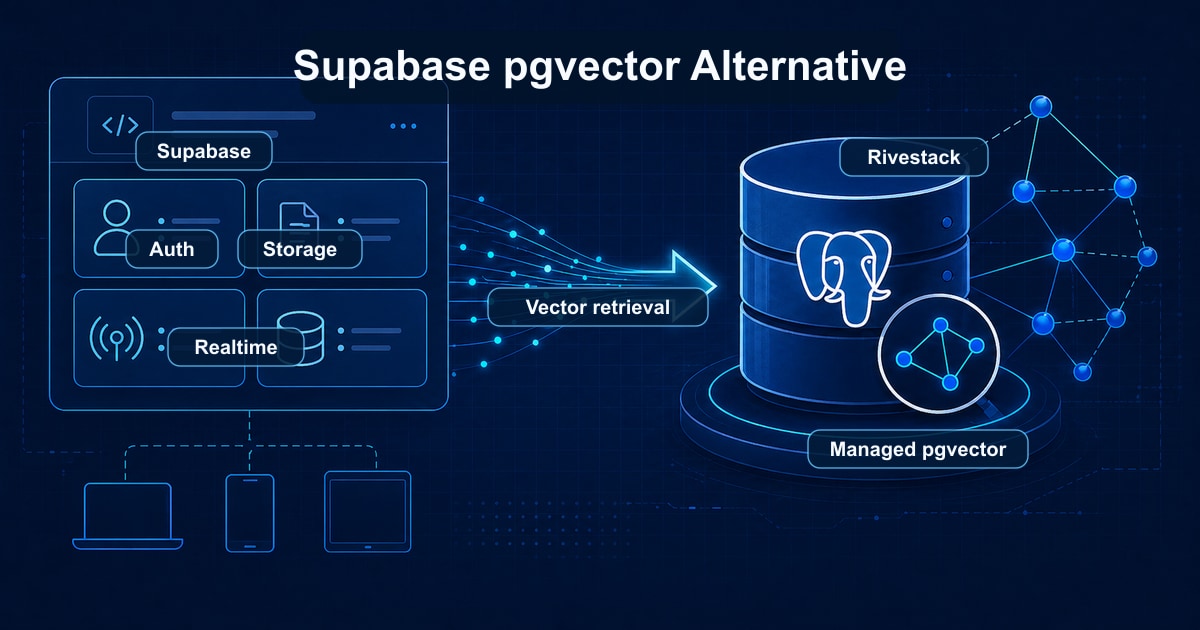

Architecture option: keep Supabase, move vector retrieval

Moving pgvector away from Supabase does not always mean leaving Supabase.

Many teams keep Supabase for:

- Auth.

- User management.

- Storage.

- Realtime features.

- Admin workflows.

- Smaller relational tables.

Then they move the vector-heavy retrieval database to a focused managed PostgreSQL provider. The application keeps Supabase where it is strongest and sends embedding search queries to a dedicated pgvector database.

This architecture works best when the vector data has a clear boundary. For example, documents, chunks, embeddings, and retrieval metadata can live in the pgvector database, while users, teams, billing, and app settings remain in Supabase.
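In application code, that boundary can be as simple as choosing a connection string by table. The sketch below is one possible shape, not a prescribed design: the class name, the environment variable names, and the table names are all hypothetical, and it only selects a DSN rather than opening connections.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class DatabaseRouter:
    """Picks a connection string based on which side of the boundary a table is on."""

    app_dsn: str      # Supabase: users, teams, billing, app settings
    vector_dsn: str   # dedicated pgvector database: retrieval data
    vector_tables: frozenset = frozenset(
        {"documents", "chunks", "embeddings", "retrieval_metadata"}
    )

    def dsn_for(self, table: str) -> str:
        return self.vector_dsn if table in self.vector_tables else self.app_dsn


# Hypothetical environment variable names with local fallbacks.
router = DatabaseRouter(
    app_dsn=os.environ.get("APP_DATABASE_URL", "postgresql://localhost/app"),
    vector_dsn=os.environ.get("VECTOR_DATABASE_URL", "postgresql://localhost/vectors"),
)

for table in ("users", "chunks"):
    target = "vector" if router.dsn_for(table) == router.vector_dsn else "app"
    print(f"{table} -> {target} database")
```

If a single query needs tables from both sets, that is the signal the boundary is not clean enough for a split.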

It is less attractive when every query depends on complex joins across both databases. In that case, the cost of splitting data can be higher than the benefit.

Migration path from Supabase pgvector

Because Supabase is PostgreSQL, migration is straightforward in most cases:

- Inventory extensions, tables, indexes, policies, functions, triggers, roles, and background jobs.

- Estimate vector table size and HNSW index size.

- Decide which tables move and which remain in Supabase.

- Choose `pg_dump` and `pg_restore` for small databases or logical replication for lower-downtime cutovers.

- Recreate connection strings and application secrets.

- Rebuild or validate HNSW indexes on the target database.

- Compare recall, latency, and query plans before switching traffic.

- Keep a rollback plan for the cutover window.
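For the dump-and-restore route, the steps above can be sketched as a small script that builds the commands without running them, so they can be reviewed first. The connection URLs, table names, and file name are placeholders. The sketch also assumes the `vector` extension has already been created on the target database, because a table-limited dump does not carry it over.

```python
import shlex


def dump_command(source_url, tables, out_file):
    """argv for pg_dump in custom format, limited to the listed tables."""
    cmd = [
        "pg_dump",
        "--format=custom",
        "--no-owner",
        "--no-privileges",
        f"--file={out_file}",
    ]
    cmd += [f"--table={table}" for table in tables]
    cmd.append(f"--dbname={source_url}")
    return cmd


def restore_command(target_url, dump_file, jobs=4):
    """argv for pg_restore; --jobs restores data and builds indexes in parallel."""
    return [
        "pg_restore",
        "--no-owner",
        "--no-privileges",
        f"--jobs={jobs}",
        f"--dbname={target_url}",
        dump_file,
    ]


# Placeholder values; nothing here connects to a database.
vector_tables = ["documents", "chunks"]
dump = dump_command("postgresql://user@source-host/postgres", vector_tables, "vectors.dump")
restore = restore_command("postgresql://user@target-host/vectors", "vectors.dump")

print(shlex.join(dump))
print(shlex.join(restore))
```

Restoring rebuilds the HNSW indexes from scratch, so restore time is usually dominated by index builds rather than by copying rows.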

If you still want Supabase for auth and app services, keep it. The database used for vector-heavy retrieval can move independently when your architecture allows it.

When not to move

Do not move away from Supabase pgvector just because another provider has a faster benchmark.

Stay put when:

- Your vector table is small.

- Latency is already good.

- The bill is predictable.

- Your team depends heavily on Supabase workflows.

- Splitting the database would complicate the product.

- You do not have a clear retrieval boundary.

Infrastructure should get simpler when you change providers. If it gets more complex without solving a real bottleneck, wait.

Supabase pgvector alternative checklist

Use this checklist when comparing options:

| Area | What to verify |
|---|---|
| SQL compatibility | Standard PostgreSQL and pgvector queries keep working |
| Storage | Random-read behavior is appropriate for HNSW |
| Memory | Plans leave room for index growth |
| Tuning | HNSW settings can be explained and adjusted |
| Cost | Pricing remains predictable as search volume grows |
| Backups | Restore testing includes vector-heavy tables |
| Migration | The provider can help plan dump, restore, replication, and cutover |
| Operations | Monitoring includes latency, cache, connections, and disk pressure |

Rivestack is designed around that checklist for managed pgvector workloads.

FAQ

Is Supabase pgvector good for production?

It can be. Supabase is a capable PostgreSQL platform, and pgvector can be used in production there. The question is workload fit. If vector search becomes latency-sensitive, expensive, or hard to tune, compare it with a focused managed pgvector provider.

Do I need to rewrite my application to migrate?

Usually not if you already use standard PostgreSQL and pgvector SQL. You may need to change connection strings, secrets, deployment config, and some operational workflows. If you split Supabase and pgvector across two databases, you may also need to adjust data access boundaries.

Can I keep Supabase auth?

Yes. You can keep Supabase for auth and app services while moving retrieval-heavy pgvector tables to another PostgreSQL database, as long as your application can route those queries separately.

Why migrate away from Supabase pgvector?

The most common reasons are cost predictability under growth, vector query latency on cache misses, and the ability to tune PostgreSQL specifically for vector workloads. Supabase shines when its auth, storage, realtime, and edge functions are part of the value. When the database is the latency-sensitive part of the system, a focused managed pgvector service usually delivers better performance per dollar.

Is Supabase pgvector slow?

Not inherently. Supabase runs standard pgvector on PostgreSQL. Teams tend to hit issues in three places: storage latency on cache misses, compute add-on costs at higher tiers, and limited workload-specific tuning help. For prototypes and smaller indexes it performs well; for high-QPS production HNSW search, dedicated NVMe-backed hosting typically wins.

How much does Supabase pgvector cost?

Supabase pricing combines a base plan with compute add-ons sized by CPU and memory. Total cost depends on the compute tier, storage, included bandwidth, and any add-ons. For production vector workloads at meaningful scale, the compute add-ons usually become the dominant line item, so compare against fixed-price node plans before committing.

How do I migrate pgvector from Supabase?

Use pg_dump and pg_restore for smaller databases, or logical replication for always-on workloads. Review tables, indexes, extensions, and roles before cutover. Application changes are usually limited to the connection string. The database work itself often completes in 30 to 60 minutes, though large tables and HNSW index rebuilds can take longer.

Bottom line

Supabase is a strong default for building apps. A focused managed pgvector provider is a stronger fit when vector search has become a production database workload.

If your Supabase pgvector database is getting expensive, slow, or hard to tune, compare it with Rivestack managed pgvector.