pgvector HNSW Tuning on Managed PostgreSQL

HNSW is usually the right pgvector index for production semantic search. It gives a strong speed-recall tradeoff, can be created before the table is fully loaded because it has no training step, and performs well for RAG and recommendation workloads.

But HNSW is not magic. On managed PostgreSQL, you still need to understand the knobs, the memory profile, the storage layer, and the way filters change query behavior.

This guide explains the main HNSW settings and how to evaluate them in a managed pgvector environment.

Start with the baseline query

Most teams start with a query like this:

SELECT id, content
FROM documents
ORDER BY embedding <=> $1
LIMIT 10;

That is a good baseline, but it is rarely the production query.

Real applications usually add filters:

SELECT id, content
FROM documents
WHERE account_id = $2
AND status = 'published'
AND language = 'en'
ORDER BY embedding <=> $1
LIMIT 10;

HNSW tuning should be done against production-shaped queries. If you tune against a clean benchmark query and deploy into a heavily filtered workload, the results may not match.

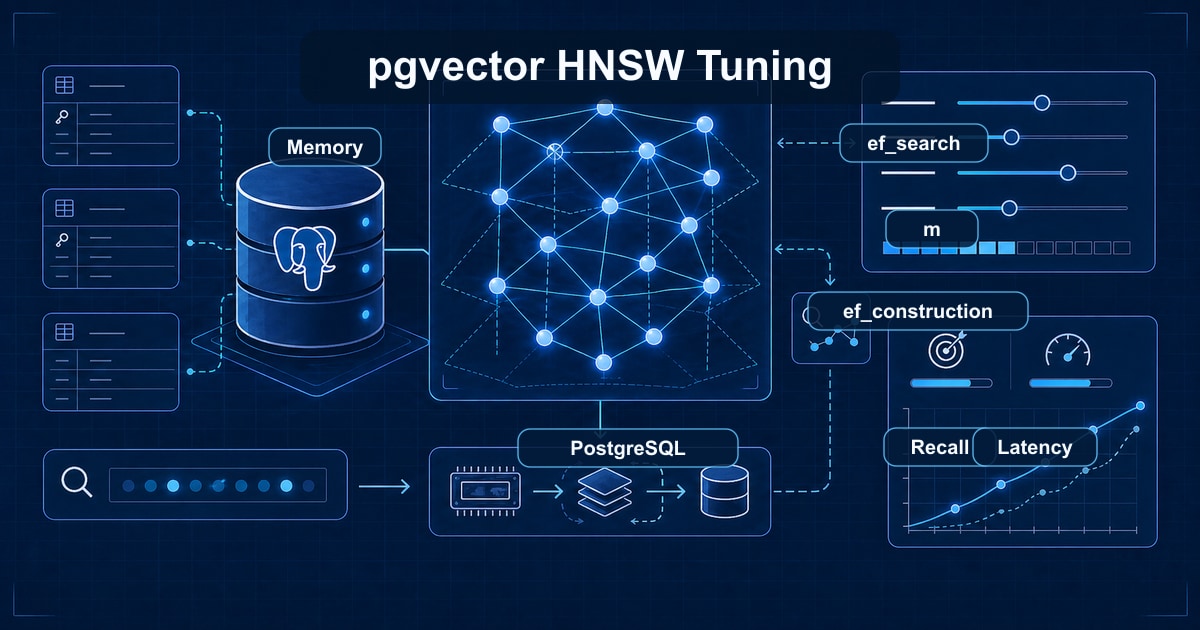

The main HNSW settings

There are three settings most teams should know.

m

m controls the maximum number of connections per graph layer. Higher values can improve recall, but they make the index larger and slower to build.

The default is a reasonable starting point. Increase it only when recall is not good enough and you have memory headroom.

When considering a higher m, ask:

- How much larger will the index become?

- Will the hot index pages still fit in memory?

- Does recall improve enough to justify the extra cost?

- Does the provider allow enough maintenance memory for the build?
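One way to answer the size question is to build a candidate index with a higher m next to a default one and compare them. The following is only a sketch: the index names and the value m = 32 are illustrative assumptions, and the operator class assumes the cosine distance operator (<=>) used in the queries above.

```sql
-- Hypothetical index names; run this on a test copy, not on the live primary.

-- Baseline index with the pgvector defaults (m = 16, ef_construction = 64).
CREATE INDEX documents_embedding_hnsw_m16
    ON documents USING hnsw (embedding vector_cosine_ops);

-- Candidate index with a higher m (32 is an illustrative value, not a recommendation).
CREATE INDEX documents_embedding_hnsw_m32
    ON documents USING hnsw (embedding vector_cosine_ops)
    WITH (m = 32);

-- Compare the on-disk size of the two indexes.
SELECT relname AS index_name,
       pg_size_pretty(pg_relation_size(oid)) AS index_size
FROM pg_class
WHERE relname IN ('documents_embedding_hnsw_m16',
                  'documents_embedding_hnsw_m32');
```

Keep only one of the two indexes in place while measuring recall and latency, so you know which index the planner is using.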

ef_construction

ef_construction controls how much work HNSW does while building the graph. Higher values can improve recall, but index creation takes longer.

For large imports, tune this before building the index, not after. Changing build-time parameters usually means rebuilding the index.

Use higher values when:

- Recall is not good enough with defaults.

- You can tolerate longer index builds.

- You have enough maintenance memory.

- The index is central enough to justify the extra build cost.
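Because m and ef_construction are fixed when the index is created, changing them means building a replacement. A minimal sketch of that swap, assuming an existing index named documents_embedding_hnsw (a hypothetical name) and an illustrative ef_construction of 128:

```sql
-- CREATE INDEX CONCURRENTLY cannot run inside a transaction block.
-- It avoids blocking writes, but it takes longer and needs extra disk space.
CREATE INDEX CONCURRENTLY documents_embedding_hnsw_v2
    ON documents USING hnsw (embedding vector_cosine_ops)
    WITH (m = 16, ef_construction = 128);  -- illustrative values

-- After validating recall and latency on the new index, retire the old one.
DROP INDEX CONCURRENTLY documents_embedding_hnsw;
ALTER INDEX documents_embedding_hnsw_v2 RENAME TO documents_embedding_hnsw;
```

Both indexes exist on disk until the old one is dropped, so check free space before starting.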

hnsw.ef_search

hnsw.ef_search controls how many candidates are considered at query time. Higher values usually improve recall and result quality, but increase query latency.

This is the setting you are most likely to adjust per workload.

For example, a user-facing search API may use a moderate value to keep latency low. A background enrichment job may use a higher value because quality matters more than response time.

hnsw.ef_search is an ordinary PostgreSQL setting, so it can be changed per session with SET or per transaction with SET LOCAL. Which one is appropriate depends on how the application and its connection pooling are configured. Your managed provider should be able to explain the safest way to apply it.
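As a sketch of the two scopes, the statements below set hnsw.ef_search for a whole session and then for a single transaction. The values 100 and 200 are illustrative, and the query vector is borrowed from an existing row (id = 1 is an assumption) so the example can run without binding $1.

```sql
-- Session scope: applies to every later query on this connection.
SET hnsw.ef_search = 100;

-- Transaction scope: reverts automatically at COMMIT or ROLLBACK.
BEGIN;
SET LOCAL hnsw.ef_search = 200;
SELECT id, content
FROM documents
ORDER BY embedding <=> (SELECT embedding FROM documents WHERE id = 1)
LIMIT 10;
COMMIT;

-- Return the session to the default (40 in pgvector) and confirm it.
RESET hnsw.ef_search;
SHOW hnsw.ef_search;
```

SET LOCAL is usually the safer choice behind a transaction-mode connection pooler, because a session-level SET can persist on a pooled connection that is later handed to a different client.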

Tune for recall and latency together

Do not tune HNSW only for speed. The useful target is speed at acceptable recall.

A practical process:

- Build a small set of representative queries.

- Run exact search or a trusted baseline for comparison.

- Measure recall at the result count you actually use.

- Measure p50, p95, and p99 latency under concurrency.

- Increase hnsw.ef_search until recall is acceptable.

- Stop when extra recall is not worth the latency cost.

Recall is product-specific. A support chatbot might need different recall than a recommendation carousel. A legal search product might need stricter quality than an internal knowledge base.
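The process above can be sketched directly in SQL. This example assumes a hypothetical eval_queries(id, embedding) table holding representative query vectors, a result count of 10, and an existing HNSW index on documents.embedding. Check with EXPLAIN that the second step actually uses the index.

```sql
-- 1. Exact top-10 per query: disable index scans so results come from a full sort.
BEGIN;
SET LOCAL enable_indexscan = off;
CREATE TEMP TABLE exact_top10 AS
SELECT q.id AS query_id, d.id AS doc_id
FROM eval_queries q            -- hypothetical table of sample query vectors
CROSS JOIN LATERAL (
    SELECT id
    FROM documents
    ORDER BY embedding <=> q.embedding
    LIMIT 10
) d;
COMMIT;

-- 2. Approximate top-10 per query through the HNSW index at a chosen ef_search.
BEGIN;
SET LOCAL hnsw.ef_search = 40;  -- the value under test
CREATE TEMP TABLE approx_top10 AS
SELECT q.id AS query_id, d.id AS doc_id
FROM eval_queries q
CROSS JOIN LATERAL (
    SELECT id
    FROM documents
    ORDER BY embedding <=> q.embedding
    LIMIT 10
) d;
COMMIT;

-- 3. recall@10: share of exact neighbors that the approximate search also returned,
--    averaged over all sample queries.
SELECT avg(hits::numeric / expected) AS recall_at_10
FROM (
    SELECT e.query_id,
           count(a.doc_id) AS hits,      -- exact neighbors also found by HNSW
           count(*)        AS expected   -- exact neighbors for this query
    FROM exact_top10 e
    LEFT JOIN approx_top10 a
           ON a.query_id = e.query_id
          AND a.doc_id   = e.doc_id
    GROUP BY e.query_id
) per_query;
```

Repeat step 2 and step 3 with different hnsw.ef_search values (dropping approx_top10 in between) and record latency alongside each recall figure. To measure a filtered workload, add the same WHERE clauses inside both lateral subqueries.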

Filters change everything

Filters are the most common reason a pgvector benchmark does not match production.

Suppose a query filters by account:

WHERE account_id = $2
ORDER BY embedding <=> $1
LIMIT 10;

If the account contains only a tiny slice of the table, PostgreSQL has to combine vector similarity with selectivity. Depending on table shape, the best plan may need:

- A B-tree index on account_id.

- A composite or partial index for common filters.

- Partitioning by tenant or time.

- Higher ef_search to find enough candidates after filtering.

- Query rewrites that narrow the candidate set first.

This is why managed pgvector support should include normal PostgreSQL expertise. Vector search does not replace relational query planning; it sits beside it.
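A few of the supporting indexes listed above might look like the following for the documents table. The index names are hypothetical, and whether any of these helps depends on filter selectivity, so treat them as candidates to test rather than a recipe.

```sql
-- B-tree index for the tenant filter.
CREATE INDEX documents_account_id_idx
    ON documents (account_id);

-- Composite B-tree index for filters that usually appear together.
CREATE INDEX documents_account_status_language_idx
    ON documents (account_id, status, language);

-- Partial HNSW index that only covers published English rows.
-- The planner can use it only when the query's WHERE clause implies this predicate.
CREATE INDEX documents_embedding_hnsw_published_en
    ON documents USING hnsw (embedding vector_cosine_ops)
    WHERE status = 'published' AND language = 'en';
```

A partial HNSW index is smaller than a full one and returns only matching rows, but each distinct predicate needs its own index, so it suits a small number of very common filters.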

Watch memory before chasing CPU

Embedding tables get large quickly. HNSW indexes add more memory pressure.

Before upgrading CPU, check:

- HNSW index size.

- shared_buffers.

- cache hit ratio.

- work_mem.

- maintenance_work_mem.

- active connections.

- whether the hot index pages fit in memory.

- whether ordinary filters have their own indexes.

If the index is much larger than memory, storage latency becomes part of query latency. More CPU will not fix a random-read bottleneck.
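Several items on that checklist can be read in one query. This sketch assumes the HNSW index is named documents_embedding_hnsw (a hypothetical name). Some managed providers restrict what pg_stat_activity shows to non-superuser roles.

```sql
SELECT pg_size_pretty(pg_relation_size('documents_embedding_hnsw')) AS hnsw_index_size,
       pg_size_pretty(pg_table_size('documents'))                   AS table_size,
       current_setting('shared_buffers')                            AS shared_buffers,
       current_setting('work_mem')                                  AS work_mem,
       current_setting('maintenance_work_mem')                      AS maintenance_work_mem,
       (SELECT count(*)
        FROM pg_stat_activity
        WHERE state = 'active')                                     AS active_connections;
```

If hnsw_index_size is well above shared_buffers and the instance's total RAM, expect physical reads to show up in query latency.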

Storage latency matters

HNSW graph traversal performs random reads. Local NVMe behaves differently from general-purpose cloud block storage when the index does not fit in cache.

That does not mean every workload needs NVMe. Small indexes that fit in memory will perform well on many providers. NVMe matters most when the vector count grows and the workload is latency-sensitive.

See the full benchmark: pgvector on NVMe vs cloud SSDs.

Build indexes after bulk loading

For large datasets, load data first and build the HNSW index afterward. This is usually faster and easier to monitor than maintaining the index during a bulk import.

Also check:

- maintenance_work_mem.

- parallel maintenance workers.

- disk space during index build.

- whether the provider allows the required settings.

- whether index creation should run during a maintenance window.

- whether the build can be tested on a restored copy first.

If the table is already live, plan the index build carefully. Index creation can consume memory, CPU, and disk. A managed provider should help choose a safe window and confirm rollback options.
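A build after bulk loading might look like this sketch. The memory and worker values are illustrative assumptions and must fit what your instance and provider allow, the index name is hypothetical, and parallel HNSW builds require a pgvector version that supports them.

```sql
-- Session-level settings for the build only (illustrative values).
SET maintenance_work_mem = '8GB';
SET max_parallel_maintenance_workers = 7;  -- workers in addition to the leader

-- Build the index once the data is loaded.
CREATE INDEX documents_embedding_hnsw
    ON documents USING hnsw (embedding vector_cosine_ops);

-- From a second session, watch build progress.
SELECT phase,
       round(100.0 * blocks_done / nullif(blocks_total, 0), 1) AS pct_done
FROM pg_stat_progress_create_index;
```

On a table that is already serving traffic, CREATE INDEX CONCURRENTLY avoids blocking writes at the cost of a slower build.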

Use EXPLAIN ANALYZE

You cannot tune what you cannot see.

For important queries, capture:

EXPLAIN (ANALYZE, BUFFERS)
SELECT id, content
FROM documents
WHERE account_id = $2
ORDER BY embedding <=> $1
LIMIT 10;

Look for:

- Whether the HNSW index is used.

- How many buffers are read from cache versus disk.

- Whether filters are applied early or late.

- Whether a regular index would reduce work.

- Whether execution time is stable across repeated runs.

BUFFERS output is especially useful because it shows whether query time is being driven by cache hits or physical reads.
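To run the plan interactively without binding parameters, one option is to borrow the query vector from an existing row. In this sketch the account id 42 and the row id 1 are placeholder assumptions.

```sql
BEGIN;
SET LOCAL hnsw.ef_search = 40;  -- pin the value being evaluated

EXPLAIN (ANALYZE, BUFFERS)
SELECT id, content
FROM documents
WHERE account_id = 42            -- placeholder tenant
ORDER BY embedding <=> (SELECT embedding FROM documents WHERE id = 1)
LIMIT 10;

COMMIT;
```

In the output, an Index Scan node naming the HNSW index confirms it is used, the "Buffers: shared hit=... read=..." line separates cache hits from reads that went to storage, and a "Rows Removed by Filter" line under the index scan indicates that the filter was applied after the vector search.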

Managed provider questions to ask

Before choosing pgvector hosting, ask:

- Which pgvector version is supported?

- Can I adjust query-time HNSW settings?

- Can support help inspect EXPLAIN ANALYZE output?

- What happens during extension upgrades?

- Are backups tested with large vector tables?

- Is the storage layer appropriate for random reads?

- How are large index builds scheduled?

- How do you recommend testing recall?

- What monitoring is available for cache, disk, CPU, and connections?

Those answers matter more than a checkbox saying "pgvector supported".

Common tuning mistakes

Avoid these patterns:

- Increasing ef_search without measuring recall.

- Increasing m without checking index size.

- Benchmarking without production filters.

- Testing only warm-cache performance.

- Ignoring p99 latency.

- Rebuilding large indexes without a maintenance plan.

- Treating vector search as separate from ordinary PostgreSQL tuning.

- Comparing providers without matching memory, storage, and concurrency.

Good tuning is boring and measured. Change one thing, test it, and keep the result only if it improves the product metric that matters.

A practical tuning workflow

Use this flow for a new production pgvector workload:

- Create the table and vector column with the correct dimension.

- Load a representative dataset.

- Add ordinary indexes for common filters.

- Build the HNSW index with default settings.

- Establish an exact-search or trusted-quality baseline.

- Run production-shaped queries under realistic concurrency.

- Tune hnsw.ef_search for recall and latency.

- Revisit m and ef_construction only if recall is still insufficient.

- Check memory and storage behavior.

- Document the chosen settings and when to revisit them.
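The first steps of this flow can be sketched as follows. The column types, the 1536 dimension, and the index names are assumptions for illustration; use the dimension your embedding model actually produces.

```sql
CREATE EXTENSION IF NOT EXISTS vector;

-- Assumed schema matching the columns used in the queries in this guide.
CREATE TABLE documents (
    id         bigserial PRIMARY KEY,
    account_id bigint NOT NULL,
    status     text   NOT NULL,
    language   text   NOT NULL,
    content    text   NOT NULL,
    embedding  vector(1536)      -- must match the embedding model's dimension
);

-- Load the representative dataset here (for example with COPY), then index.

-- Ordinary index for the common tenant filter.
CREATE INDEX documents_account_id_idx
    ON documents (account_id);

-- HNSW index with default settings; cosine distance matches the <=> operator.
CREATE INDEX documents_embedding_hnsw
    ON documents USING hnsw (embedding vector_cosine_ops);

-- Refresh planner statistics after the bulk load.
ANALYZE documents;
```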

If you are still choosing a provider, use the workflow as a benchmark plan. A good managed pgvector provider should be comfortable helping you run it.

FAQ

Should every pgvector workload use HNSW?

Not every workload, but HNSW is usually the production default for fast approximate nearest-neighbor search. Exact search can be fine for small tables. IVFFlat can be useful in some cases, but HNSW is often easier to operate for evolving datasets.

What should hnsw.ef_search be?

There is no universal value. Start with the default, measure recall and latency, then increase it if result quality is not good enough. Stop when extra quality is not worth the latency cost.

Does more RAM always fix pgvector latency?

No. More RAM helps when cache pressure is the bottleneck. If the query plan is poor, filters are missing indexes, ef_search is too high, or concurrency is saturating CPU, more RAM may not solve the problem.

What are good values for HNSW m and ef_construction?

The pgvector defaults (m = 16, ef_construction = 64) are reasonable starting points for most 1536-dimension workloads. Raising either value can improve recall at the cost of longer builds; raising m also increases index size and memory use. Tune by measuring recall against your dataset, not by copying values from a blog post.

How long does an HNSW index build take?

Build time grows at least linearly with row count and rises as m and ef_construction increase; dimension, maintenance memory, and parallel workers also matter. As a rough order of magnitude, a 1M × 1536-dimension index with default parameters takes minutes on a well-sized node, and tens of millions of rows take correspondingly longer. Build the index after bulk loading data; loading rows into a table that already has an HNSW index is much slower than loading first and building afterward.

Why is my pgvector HNSW query slow?

Common causes: the index is not actually being used (check EXPLAIN), ef_search is set too high, the index spilled out of cache and storage latency is dominating, filters are missing supporting B-tree indexes, the query is missing LIMIT, or concurrency is saturating CPU. Start with EXPLAIN (ANALYZE, BUFFERS) and measure cache hit ratio before adding hardware.

Should I use HNSW or IVFFlat?

HNSW is the usual production default for evolving datasets: it offers better recall at low latency and needs no retraining when data changes. IVFFlat can be a good fit for static datasets where you want a smaller index and a faster build, and you are willing to rebuild the index so its lists are recomputed when the data shifts meaningfully. You can use both index types in the same database.

How do I monitor pgvector index health?

Track index size with pg_relation_size, cache hit ratio for the index pages, query latency percentiles, recall against a labeled validation set, and EXPLAIN plans for representative queries. On a managed service, those metrics should be exposed through the provider's monitoring; on self-hosted setups, wire them into Prometheus or similar.
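For the size and cache parts of that list, a query over PostgreSQL's statistics views is one possible starting point. It reports every index on the documents table; the hit percentage reflects PostgreSQL's shared buffers only, not the operating system page cache.

```sql
SELECT s.indexrelname                                  AS index_name,
       pg_size_pretty(pg_relation_size(s.indexrelid))  AS index_size,
       s.idx_scan                                      AS scans,
       io.idx_blks_hit,
       io.idx_blks_read,
       round(100.0 * io.idx_blks_hit
             / nullif(io.idx_blks_hit + io.idx_blks_read, 0), 2) AS cache_hit_pct
FROM pg_stat_user_indexes s
JOIN pg_statio_user_indexes io USING (indexrelid)
WHERE s.relname = 'documents';
```

A scans count that stays at zero for the HNSW index suggests queries are not using it, which is worth confirming with EXPLAIN.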

Bottom line

Good HNSW tuning is workload-specific. Start with defaults, measure recall and latency, then tune ef_search, filters, memory, and storage.

If you want managed PostgreSQL where pgvector tuning is part of the product, see Rivestack managed pgvector.