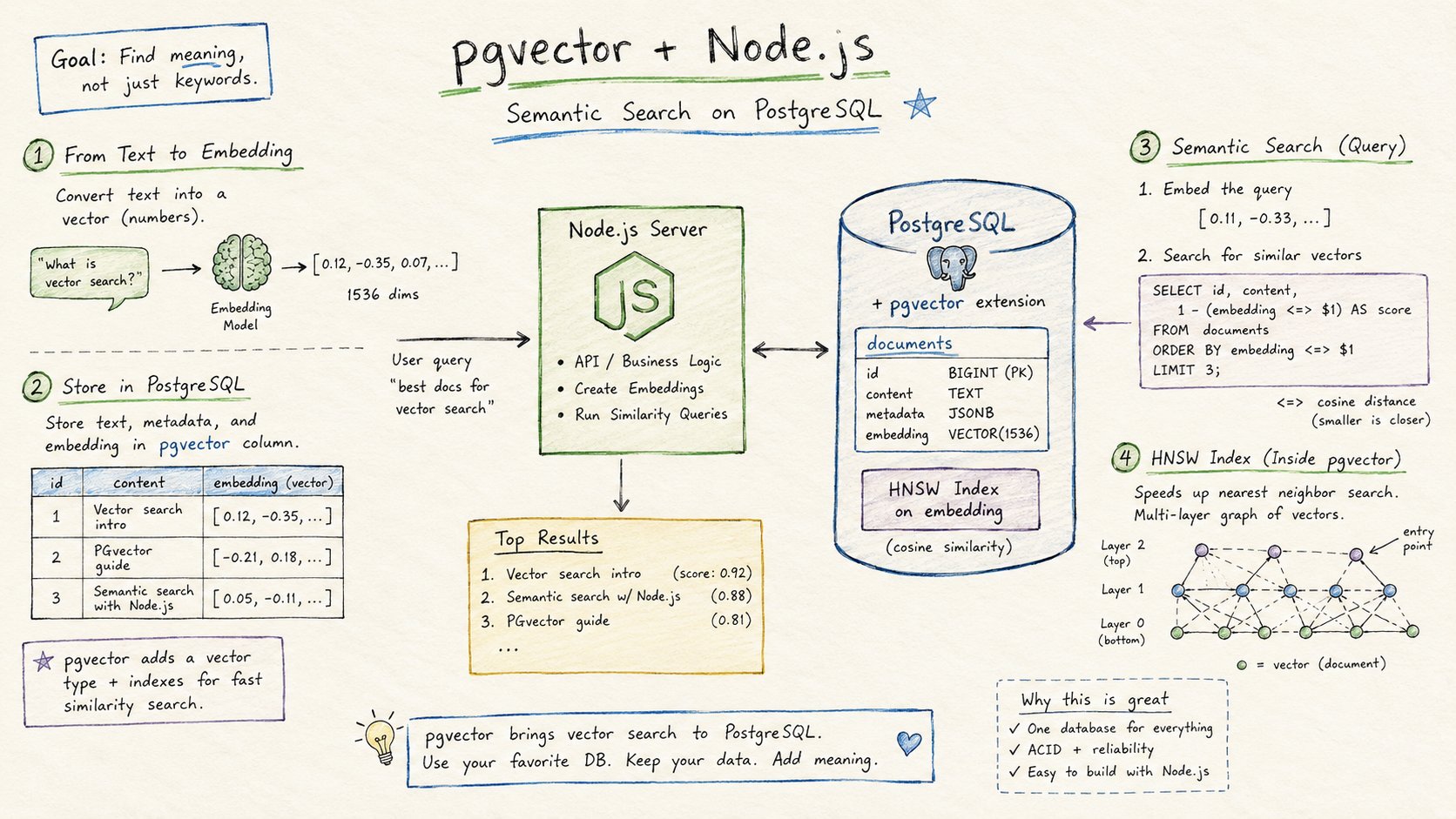

pgvector with Node.js: Build Semantic Search on PostgreSQL

Most pgvector tutorials are written for Python. That makes sense: Python dominates the AI tooling ecosystem and most RAG frameworks are Python-first. But a large share of production web applications are built with Node.js, and those applications need vector search too. If you are building an API with Express, a full-stack app with Next.js, or a background worker with plain Node.js, this guide has everything you need to get pgvector working.

We will cover connecting with node-postgres, inserting and querying embeddings, building an HNSW index, and wiring it all into a functional RAG pipeline. At the end, there is also a section on using Drizzle ORM for TypeScript projects that prefer an ORM layer.

What You Need Before You Start

You need:

- A PostgreSQL database (14 or newer) with the

vectorextension enabled - Node.js 18 or newer

- An OpenAI API key for generating embeddings (or any other embedding provider)

If you are running PostgreSQL locally, install pgvector from the pgvector GitHub repo. On Rivestack, the extension is pre-installed and enabled by default on every database. Connect to your database and run:

CREATE EXTENSION IF NOT EXISTS vector;Verify it is installed:

SELECT extname, extversion FROM pg_extension WHERE extname = 'vector';Installing the Node.js Packages

The core packages you need are pg (node-postgres), the pgvector Node.js client, and the OpenAI SDK:

npm install pg pgvector openaiIf you are using TypeScript (recommended for any serious project), add the type definitions:

npm install --save-dev @types/pg typescriptThe pgvector package provides utilities for encoding and decoding vector values between JavaScript arrays and the PostgreSQL vector type. Without it, you would need to manually serialize arrays to the wire format that PostgreSQL expects, which is error-prone.

Connecting to PostgreSQL

The standard connection setup with node-postgres:

import pg from 'pg';

import { fromSql, toSql } from 'pgvector';

const { Pool } = pg;

const pool = new Pool({

connectionString: process.env.DATABASE_URL,

});

export { pool };Using a Pool rather than a single Client is important for any application that handles concurrent requests. Each query borrows a connection from the pool, runs, and returns the connection. Without pooling, a burst of requests will exhaust PostgreSQL's connection limit.

Creating the Schema

Create a table with a vector column for your embeddings:

async function createSchema(): Promise<void> {

await pool.query(`

CREATE TABLE IF NOT EXISTS documents (

id BIGSERIAL PRIMARY KEY,

content TEXT NOT NULL,

source TEXT,

embedding VECTOR(1536)

)

`);

}The dimension (1536) matches OpenAI's text-embedding-3-small model. Adjust based on the model you use:

| Model | Dimensions |

|---|---|

| OpenAI text-embedding-3-small | 1536 |

| OpenAI text-embedding-3-large | 3072 |

| Cohere embed-v4 | 1024 |

| Google text-embedding-005 | 768 |

| all-MiniLM-L6-v2 (local) | 384 |

The source column is optional but useful for filtering. We will use it later in the metadata filtering section.

Generating and Inserting Embeddings

Install and configure the OpenAI client:

import OpenAI from 'openai';

const openai = new OpenAI({

apiKey: process.env.OPENAI_API_KEY,

});

async function embed(text: string): Promise<number[]> {

const response = await openai.embeddings.create({

model: 'text-embedding-3-small',

input: text,

});

return response.data[0].embedding;

}Insert a document and its embedding:

import { toSql } from 'pgvector';

async function insertDocument(content: string, source?: string): Promise<void> {

const embedding = await embed(content);

await pool.query(

`INSERT INTO documents (content, source, embedding) VALUES ($1, $2, $3)`,

[content, source ?? null, toSql(embedding)]

);

}The toSql call is the key piece here. It converts the JavaScript number[] into the string format that PostgreSQL's vector type expects on input: [0.1, 0.2, ..., 0.9]. Without this conversion, the query will fail with a type error.

For bulk inserts, batch your embedding calls to avoid hitting rate limits:

async function insertDocuments(

docs: Array<{ content: string; source?: string }>

): Promise<void> {

const BATCH_SIZE = 50;

for (let i = 0; i < docs.length; i += BATCH_SIZE) {

const batch = docs.slice(i, i + BATCH_SIZE);

const embeddings = await Promise.all(batch.map((d) => embed(d.content)));

const client = await pool.connect();

try {

await client.query('BEGIN');

for (let j = 0; j < batch.length; j++) {

await client.query(

`INSERT INTO documents (content, source, embedding) VALUES ($1, $2, $3)`,

[batch[j].content, batch[j].source ?? null, toSql(embeddings[j])]

);

}

await client.query('COMMIT');

} catch (err) {

await client.query('ROLLBACK');

throw err;

} finally {

client.release();

}

}

}Wrapping each batch in a transaction keeps inserts atomic and speeds up writes because PostgreSQL flushes to disk once per transaction rather than once per row.

Querying by Vector Similarity

To search for the most relevant documents, generate a query embedding and use pgvector's distance operators in the SQL query:

import { fromSql, toSql } from 'pgvector';

interface SearchResult {

id: number;

content: string;

source: string | null;

similarity: number;

}

async function search(query: string, limit = 5): Promise<SearchResult[]> {

const queryEmbedding = await embed(query);

const result = await pool.query<SearchResult>(

`SELECT

id,

content,

source,

1 - (embedding <=> $1) AS similarity

FROM documents

ORDER BY embedding <=> $1

LIMIT $2`,

[toSql(queryEmbedding), limit]

);

return result.rows;

}pgvector provides three distance operators:

<=>— cosine distance (best for text embeddings)<->— Euclidean (L2) distance<#>— negative inner product

For text embeddings, cosine distance is almost always the right choice. It measures the angle between two vectors and is invariant to their magnitude, which matters because embedding magnitudes vary across documents.

The 1 - (embedding <=> $1) expression converts cosine distance (0 = identical) into cosine similarity (1 = identical), which is more intuitive to display. For actual sorting, you order by the distance, not the similarity.

Adding an HNSW Index

Without an index, PostgreSQL does a full sequential scan through every row in the table for each similarity query. This is fine for a few thousand rows but becomes a bottleneck at scale. pgvector supports two index types: HNSW and IVFFlat. For most applications, HNSW is the better choice.

Create the index after loading your initial data:

CREATE INDEX CONCURRENTLY documents_embedding_idx

ON documents

USING hnsw (embedding vector_cosine_ops)

WITH (m = 16, ef_construction = 64);CONCURRENTLY lets the index build without locking writes to the table. On a large dataset, index construction takes a while; this keeps the table available.

The two key parameters:

m— connections per node in the graph (default 16, range 2 to 100). Higher values mean better recall and more memory.ef_construction— search depth during construction (default 64). Higher values improve index quality at the cost of slower builds.

At query time, you can tune the recall versus speed tradeoff with hnsw.ef_search:

async function searchWithHighRecall(

query: string,

limit = 5

): Promise<SearchResult[]> {

const queryEmbedding = await embed(query);

const client = await pool.connect();

try {

await client.query(`SET LOCAL hnsw.ef_search = 100`);

const result = await client.query<SearchResult>(

`SELECT id, content, source, 1 - (embedding <=> $1) AS similarity

FROM documents

ORDER BY embedding <=> $1

LIMIT $2`,

[toSql(queryEmbedding), limit]

);

return result.rows;

} finally {

client.release();

}

}SET LOCAL scopes the setting to the current transaction. This is safer than SET, which would affect the entire session and potentially other queries running through the same pooled connection.

The default ef_search is 40. For RAG applications where missing a relevant document has real cost, raising it to 100 or 200 is worth the slight latency increase.

Filtering by Metadata

One advantage of pgvector over standalone vector databases is that you can filter with standard SQL WHERE clauses. No separate filter layer, no additional query planner, no schema changes required.

Filter by source to scope results to a specific data category:

async function searchBySource(

query: string,

source: string,

limit = 5

): Promise<SearchResult[]> {

const queryEmbedding = await embed(query);

const result = await pool.query<SearchResult>(

`SELECT id, content, source, 1 - (embedding <=> $1) AS similarity

FROM documents

WHERE source = $2

ORDER BY embedding <=> $1

LIMIT $3`,

[toSql(queryEmbedding), source, limit]

);

return result.rows;

}For multi-tenant applications, replace source with a user_id or tenant_id column. Combine this with PostgreSQL row-level security to enforce tenant isolation at the database level, not the application level.

One nuance: when you add a WHERE clause to a vector similarity query, PostgreSQL may not use the HNSW index if the filter is selective enough to make a sequential scan cheaper. The query planner decides. If you have high-cardinality filters and the planner is not using the index, create a partial index:

CREATE INDEX documents_embedding_wiki_idx

ON documents

USING hnsw (embedding vector_cosine_ops)

WHERE source = 'wikipedia';Building a Complete RAG Pipeline

Retrieval-Augmented Generation connects vector search to a language model. The pattern is: embed the user's question, retrieve the most relevant documents, include those documents as context in the prompt, and ask the model to answer.

Here is a complete, minimal implementation:

import pg from 'pg';

import OpenAI from 'openai';

import { toSql } from 'pgvector';

const pool = new pg.Pool({ connectionString: process.env.DATABASE_URL });

const openai = new OpenAI({ apiKey: process.env.OPENAI_API_KEY });

async function embed(text: string): Promise<number[]> {

const resp = await openai.embeddings.create({

model: 'text-embedding-3-small',

input: text,

});

return resp.data[0].embedding;

}

async function retrieve(query: string, k = 5): Promise<string[]> {

const qEmbed = await embed(query);

const result = await pool.query(

`SELECT content FROM documents ORDER BY embedding <=> $1 LIMIT $2`,

[toSql(qEmbed), k]

);

return result.rows.map((r) => r.content as string);

}

async function answer(question: string): Promise<string> {

const docs = await retrieve(question);

const context = docs.join('\n\n');

const completion = await openai.chat.completions.create({

model: 'gpt-4o-mini',

messages: [

{

role: 'system',

content:

'Answer questions using only the provided context. If the context does not contain the answer, say so.',

},

{

role: 'user',

content: `Context:\n${context}\n\nQuestion: ${question}`,

},

],

});

return completion.choices[0].message.content ?? '';

}

// Usage

const reply = await answer('What is the difference between HNSW and IVFFlat?');

console.log(reply);This is the core pattern. For production, you would add:

- Batched embedding calls for indexing pipelines

- Caching for repeated or similar queries

- A reranking step to improve precision on the top results

- Streaming responses with

openai.chat.completions.stream()

Using pgvector with Drizzle ORM

If your project uses Drizzle ORM (a popular TypeScript ORM), there is native pgvector support via the drizzle-orm and pgvector packages.

Install the dependencies:

npm install drizzle-orm pg pgvector

npm install --save-dev drizzle-kit @types/pgDefine your schema:

import { pgTable, bigserial, text, customType } from 'drizzle-orm/pg-core';

import { sql } from 'drizzle-orm';

const vector = customType<{ data: number[]; driverData: string }>({

dataType(config) {

return `vector(${config?.dimensions ?? 1536})`;

},

toDriver(value: number[]): string {

return `[${value.join(',')}]`;

},

fromDriver(value: string): number[] {

return value

.slice(1, -1)

.split(',')

.map(Number);

},

});

export const documents = pgTable('documents', {

id: bigserial('id', { mode: 'number' }).primaryKey(),

content: text('content').notNull(),

source: text('source'),

embedding: vector('embedding', { dimensions: 1536 }),

});Insert a document:

import { db } from './db';

import { documents } from './schema';

await db.insert(documents).values({

content: 'pgvector adds vector search to PostgreSQL.',

source: 'docs',

embedding: await embed('pgvector adds vector search to PostgreSQL.'),

});Query by similarity with Drizzle (using raw SQL for the vector operators, since Drizzle does not have built-in pgvector operator support yet):

import { sql } from 'drizzle-orm';

async function drizzleSearch(query: string, limit = 5) {

const queryEmbed = await embed(query);

const embeddingStr = `[${queryEmbed.join(',')}]`;

return db

.select({

id: documents.id,

content: documents.content,

similarity: sql<number>`1 - (embedding <=> ${embeddingStr}::vector)`,

})

.from(documents)

.orderBy(sql`embedding <=> ${embeddingStr}::vector`)

.limit(limit);

}The ::vector cast is necessary because Drizzle passes the string as a text literal and PostgreSQL needs the explicit cast to recognize it as a vector.

Next.js Integration

For Next.js Server Actions or API routes, the connection setup is slightly different because serverless and edge environments have short-lived function instances. Avoid creating a new pool on every request:

// lib/db.ts

import pg from 'pg';

declare global {

// eslint-disable-next-line no-var

var pgPool: pg.Pool | undefined;

}

export const pool =

globalThis.pgPool ??

new pg.Pool({ connectionString: process.env.DATABASE_URL });

if (process.env.NODE_ENV !== 'production') {

globalThis.pgPool = pool;

}The globalThis trick prevents Next.js's hot reload from creating a new pool instance on every file change in development, which would exhaust your PostgreSQL connection limit quickly.

In a Next.js Server Action:

'use server';

import { pool } from '@/lib/db';

import { toSql } from 'pgvector';

import { embed } from '@/lib/embeddings';

export async function semanticSearch(query: string) {

const queryEmbedding = await embed(query);

const result = await pool.query(

`SELECT id, content, 1 - (embedding <=> $1) AS similarity

FROM documents

ORDER BY embedding <=> $1

LIMIT 5`,

[toSql(queryEmbedding)]

);

return result.rows;

}Common Mistakes

Forgetting toSql on insert. If you pass a raw JavaScript array as the embedding value, you will get invalid input syntax for type vector. Always call toSql(embedding) before passing it to pool.query.

Passing the embedding twice in similarity queries. The ORDER BY embedding <=> $1 and 1 - (embedding <=> $1) in the SELECT both reference $1. PostgreSQL will compute the distance twice unless you use a subquery or CTE. For most applications this is not a meaningful overhead, but if you are profiling hot queries it is worth knowing.

Creating the HNSW index before loading data. The index will be correct but built on an empty or sparse dataset, resulting in lower recall. Load your data first, then build the index. If you need to add data after the index exists, PostgreSQL maintains it automatically on every insert.

Using SET hnsw.ef_search without LOCAL. A bare SET persists for the entire session. Since connection pools reuse sessions, this can cause earlier settings to bleed into later queries from different requests. Always use SET LOCAL inside a transaction or use SET SESSION intentionally.

Ignoring connection pool size. The default pg.Pool max is 10. For APIs with high concurrent traffic, you may need to raise this. For serverless deployments, consider using an external pooler like PgBouncer or the connection pooler built into Rivestack, which aggregates connections from many function instances into a manageable number of PostgreSQL connections.

Performance Expectations

With an HNSW index and m = 16, ef_construction = 64, you should expect:

- Sub-10ms similarity queries on datasets with millions of vectors

- Index build time of roughly 2 to 5 minutes per million vectors (hardware-dependent)

- Memory usage of approximately 1.5GB per million 1536-dimension vectors in the index

If queries are slower than expected, run EXPLAIN ANALYZE on your similarity query and check whether the index is being used. Look for Index Scan using documents_embedding_idx in the output. If you see Seq Scan, either the index does not match the operator you are using (check vector_cosine_ops vs vector_l2_ops) or a WHERE clause is preventing index use.

Where to Go From Here

You now have a working pgvector setup for Node.js:

node-postgreswithtoSql/fromSqlfor type-safe vector operations- HNSW index with tunable recall at query time

- Metadata filtering using standard SQL

- A complete RAG pipeline skeleton

- Drizzle ORM integration for TypeScript projects

- Next.js-safe connection pool pattern

The next steps depend on your application. For scale, add the hybrid search pattern that combines vector similarity with full-text search. For multi-tenant applications, read the row-level security guide. For production reliability, check the high availability guide.

The operational layer — keeping pgvector updated, tuning autovacuum for tables with frequent updates, managing NVMe storage for fast index traversal — is where self-hosted PostgreSQL gets complicated. If you would rather skip that and focus on the application, Rivestack provides managed PostgreSQL with pgvector pre-installed, connection pooling built in, and NVMe-backed storage. You get the same SQL interface you have just built, without the infrastructure overhead.