Hybrid Search with pgvector and PostgreSQL Full-Text Search

Pure vector search is not always enough. If you have built a semantic search system with pgvector and noticed that exact keyword matches sometimes get buried under loosely related results, you have run into the fundamental limitation of embedding-only retrieval. Hybrid search fixes this by combining vector similarity with traditional keyword matching. In PostgreSQL, you can do both in a single query, without any additional infrastructure.

This guide covers how hybrid search works, how to implement it with pgvector and tsvector, and how to tune the combination for production workloads.

Why Pure Vector Search Falls Short

Vector embeddings capture semantic meaning. A search for "how do I fix a broken pipe" will match documents about plumbing repairs even if none of them contain the exact phrase "broken pipe." That is the strength. The weakness is the same property: vectors generalize, so they also match things that are semantically adjacent but not what the user actually wants.

Consider a search for "Python 3.12 walrus operator." A vector search will find documents about Python syntax, operator precedence, and maybe some 3.11 release notes. But the user wants something specific about 3.12 specifically, and they want it about the walrus operator specifically. An exact keyword match on "walrus operator" would outperform the vector search here.

This is not a pathological edge case. It shows up regularly with:

- Version-specific queries ("react 19 use hook", "postgres 16 logical replication")

- Proper nouns and brand names ("LangChain", "Pinecone", "pgbouncer")

- Code symbols and function names (

pg_stat_statements,CREATE INDEX CONCURRENTLY) - Rare technical terms that embeddings have seen only a few times in training

Full-text search handles these cases well because it operates on exact tokens, not meaning. Hybrid search gets you both.

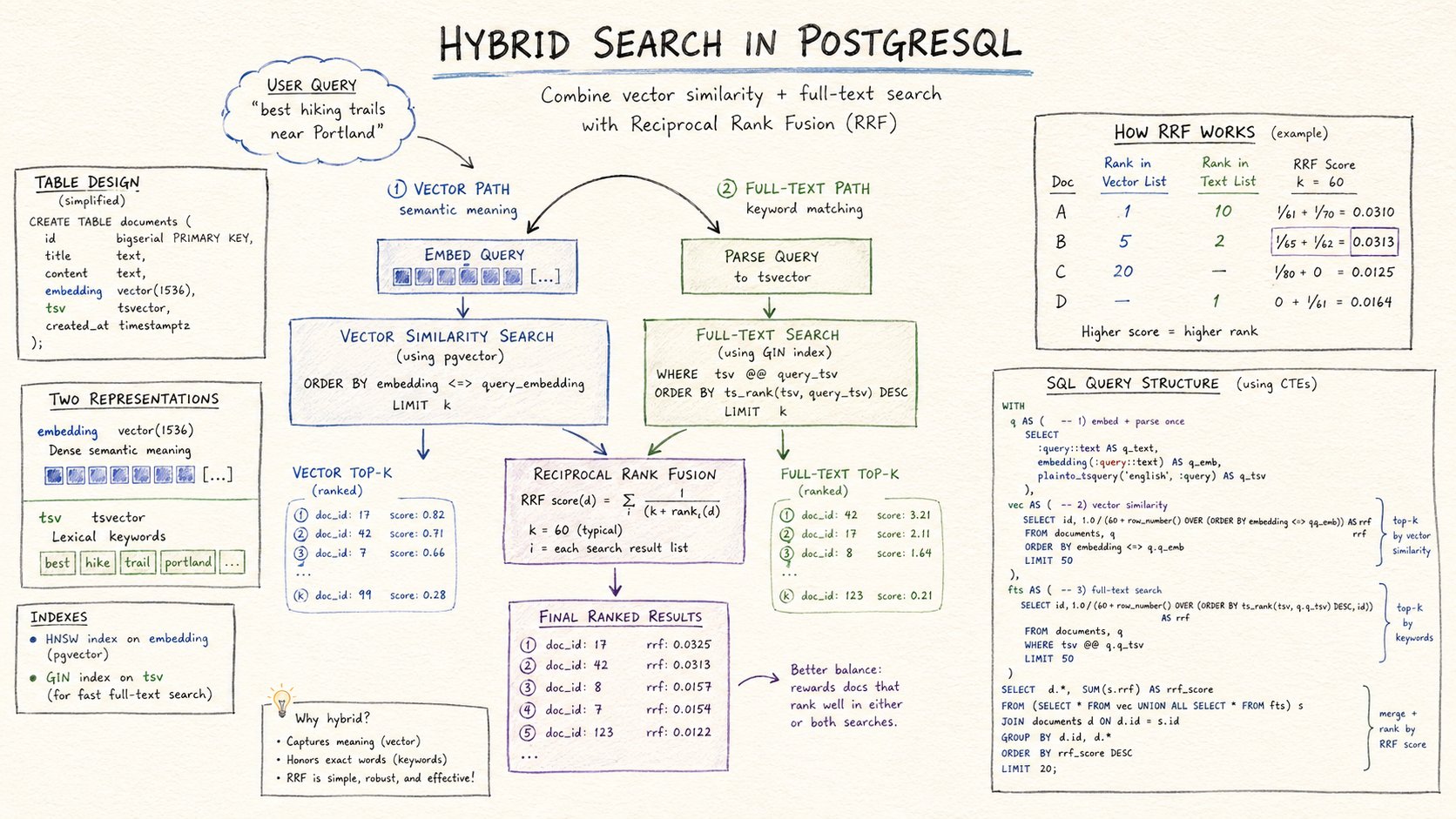

How Hybrid Search Works

The core idea is simple: run both searches, score the results from each independently, then combine the scores into a single ranked list.

The tricky part is that vector similarity scores and text search ranking scores live on completely different scales. Cosine similarity is bounded between 0 and 1 (or -1 and 1 depending on implementation). ts_rank produces unbounded positive floats. You cannot add them directly.

The most common solution is Reciprocal Rank Fusion (RRF). Instead of combining raw scores, you combine rankings. Each result gets a score of 1 / (k + rank) where k is a smoothing constant (usually 60). A document ranked 1st by vector search and 3rd by text search will score higher than one ranked 5th by both. RRF is robust, parameter-free beyond choosing k, and works well in practice.

The alternative is score normalization: rescale both scores to [0, 1] and then take a weighted sum. This is more tunable but requires knowing the distribution of scores in advance, which changes as your data grows.

This guide will implement both, starting with RRF.

Schema Setup

Start with a table that stores both the text content and the embedding:

CREATE TABLE documents (

id BIGSERIAL PRIMARY KEY,

content TEXT NOT NULL,

embedding VECTOR(1536),

fts TSVECTOR GENERATED ALWAYS AS (to_tsvector('english', content)) STORED,

created_at TIMESTAMPTZ DEFAULT now()

);The fts column uses a generated column so the tsvector is always in sync with content. No triggers, no manual updates.

Create indexes for both retrieval paths:

-- For vector similarity search

CREATE INDEX ON documents USING hnsw (embedding vector_cosine_ops);

-- For full-text search

CREATE INDEX ON documents USING gin (fts);The HNSW index gives you approximate nearest neighbor search with good recall. The GIN index accelerates tsvector queries with @@ operator.

Inserting Documents

import psycopg

from openai import OpenAI

client = OpenAI()

def embed(text: str) -> list[float]:

response = client.embeddings.create(

model="text-embedding-3-small",

input=text

)

return response.data[0].embedding

def insert_document(conn, content: str):

embedding = embed(content)

with conn.cursor() as cur:

cur.execute(

"INSERT INTO documents (content, embedding) VALUES (%s, %s)",

(content, embedding)

)

conn.commit()The fts column is auto-populated by PostgreSQL, so you only need to insert content and embedding.

Reciprocal Rank Fusion Query

Here is the full hybrid search query using RRF:

WITH vector_search AS (

SELECT

id,

content,

ROW_NUMBER() OVER (ORDER BY embedding <=> $1) AS rank

FROM documents

ORDER BY embedding <=> $1

LIMIT 20

),

text_search AS (

SELECT

id,

content,

ROW_NUMBER() OVER (ORDER BY ts_rank(fts, query) DESC) AS rank

FROM documents, plainto_tsquery('english', $2) query

WHERE fts @@ query

ORDER BY ts_rank(fts, query) DESC

LIMIT 20

),

combined AS (

SELECT

COALESCE(v.id, t.id) AS id,

COALESCE(v.content, t.content) AS content,

COALESCE(1.0 / (60 + v.rank), 0) +

COALESCE(1.0 / (60 + t.rank), 0) AS rrf_score

FROM vector_search v

FULL OUTER JOIN text_search t ON v.id = t.id

)

SELECT id, content, rrf_score

FROM combined

ORDER BY rrf_score DESC

LIMIT 10;The parameters are:

$1: the query embedding (a vector)$2: the search query text (a string)

Both searches fetch the top 20 results independently. The FULL OUTER JOIN captures documents that appear in one result set but not the other. A document only found by vector search still gets a score, it just has no contribution from the text search side.

Python Implementation

def hybrid_search(conn, query: str, top_k: int = 10) -> list[dict]:

query_embedding = embed(query)

sql = """

WITH vector_search AS (

SELECT

id,

content,

ROW_NUMBER() OVER (ORDER BY embedding <=> %s::vector) AS rank

FROM documents

ORDER BY embedding <=> %s::vector

LIMIT 20

),

text_search AS (

SELECT

id,

content,

ROW_NUMBER() OVER (ORDER BY ts_rank(fts, query) DESC) AS rank

FROM documents, plainto_tsquery('english', %s) query

WHERE fts @@ query

ORDER BY ts_rank(fts, query) DESC

LIMIT 20

),

combined AS (

SELECT

COALESCE(v.id, t.id) AS id,

COALESCE(v.content, t.content) AS content,

COALESCE(1.0 / (60 + v.rank), 0.0) +

COALESCE(1.0 / (60 + t.rank), 0.0) AS rrf_score

FROM vector_search v

FULL OUTER JOIN text_search t ON v.id = t.id

)

SELECT id, content, rrf_score

FROM combined

ORDER BY rrf_score DESC

LIMIT %s;

"""

with conn.cursor() as cur:

cur.execute(sql, (query_embedding, query_embedding, query, top_k))

rows = cur.fetchall()

return [{"id": r[0], "content": r[1], "score": float(r[2])} for r in rows]Note that the embedding vector is passed twice in the parameter list because it appears in both the ORDER BY and LIMIT subqueries. This is a small redundancy, but it keeps the query readable.

Score Normalization Alternative

If you want more control over the weighting between vector and text results, normalize both scores to [0, 1] and apply a weight:

WITH vector_search AS (

SELECT

id,

content,

1 - (embedding <=> $1) AS similarity -- cosine similarity, 0 to 1

FROM documents

ORDER BY embedding <=> $1

LIMIT 20

),

text_search AS (

SELECT

id,

content,

ts_rank(fts, plainto_tsquery('english', $2)) AS text_score

FROM documents

WHERE fts @@ plainto_tsquery('english', $2)

ORDER BY text_score DESC

LIMIT 20

),

normalized_vector AS (

SELECT id, content,

(similarity - MIN(similarity) OVER ()) /

NULLIF(MAX(similarity) OVER () - MIN(similarity) OVER (), 0) AS score

FROM vector_search

),

normalized_text AS (

SELECT id, content,

(text_score - MIN(text_score) OVER ()) /

NULLIF(MAX(text_score) OVER () - MIN(text_score) OVER (), 0) AS score

FROM text_search

),

combined AS (

SELECT

COALESCE(v.id, t.id) AS id,

COALESCE(v.content, t.content) AS content,

0.7 * COALESCE(v.score, 0) + 0.3 * COALESCE(t.score, 0) AS hybrid_score

FROM normalized_vector v

FULL OUTER JOIN normalized_text t ON v.id = t.id

)

SELECT id, content, hybrid_score

FROM combined

ORDER BY hybrid_score DESC

LIMIT 10;The weights 0.7 and 0.3 favor vector search. Adjust them based on your data. If users frequently search for exact terms, shift more weight to text. If your content is highly semantic (support articles, research papers, narrative documents), keep more weight on the vector side.

The downside of normalization is that it depends on the current result set. The "max similarity" in a given query window is not a stable anchor. RRF avoids this problem entirely, which is why it tends to be preferred in practice.

Handling the Case Where Text Search Returns Nothing

When the query text matches no documents (rare terms, misspellings, code syntax), the text search side of the FULL OUTER JOIN contributes nothing. The query gracefully degrades to pure vector search. You get the top results from the vector side, all with the maximum possible 1.0 / (60 + rank) score, ranked by their embedding similarity alone.

This fallback behavior is a significant advantage of the RRF approach: it degrades gracefully without any conditional logic in your application code.

Adding Metadata Filtering

Real applications almost always need to filter results to a subset of documents. User-specific data, tenant isolation, document type filters. Add a filter clause to both subqueries:

WITH vector_search AS (

SELECT

id,

content,

ROW_NUMBER() OVER (ORDER BY embedding <=> $1) AS rank

FROM documents

WHERE user_id = $3 -- filter applied here

ORDER BY embedding <=> $1

LIMIT 20

),

text_search AS (

SELECT

id,

content,

ROW_NUMBER() OVER (ORDER BY ts_rank(fts, query) DESC) AS rank

FROM documents, plainto_tsquery('english', $2) query

WHERE fts @@ query

AND user_id = $3 -- and here

ORDER BY ts_rank(fts, query) DESC

LIMIT 20

),

...For pgvector, pre-filtering (applying the WHERE before ANN search) is safe when the filtered set is a meaningful fraction of the total table. If the filter is very selective (returning less than 1% of rows), consider a partial HNSW index:

CREATE INDEX ON documents USING hnsw (embedding vector_cosine_ops)

WHERE user_id = 42;This creates a separate index covering only the documents for that user. It is only worth it for very high-volume users.

Tuning the Candidate Set Size

The LIMIT 20 in each subquery controls how many candidates each retrieval method contributes. Increasing this improves recall but increases query cost. Decreasing it makes queries faster but risks missing relevant results.

A good starting point is 20 candidates per side. Monitor your actual recall by checking whether the correct document appears in the top 10 combined results for known queries. If it often appears at positions 15 to 20 in the individual result sets, consider increasing to 40 or 50.

The RRF denominator k = 60 is a convention from the original paper. It controls how much the tail of rankings is penalized relative to the top. Larger k makes the ranking more democratic (small differences in rank matter less). Smaller k amplifies the difference between position 1 and position 2.

Integrating with LangChain

If you are building a RAG pipeline with LangChain, you can wrap the hybrid search function in a custom retriever:

from langchain_core.retrievers import BaseRetriever

from langchain_core.documents import Document

from langchain_core.callbacks import CallbackManagerForRetrieverRun

from typing import List

class HybridPGRetriever(BaseRetriever):

conn: object

embed_fn: object

top_k: int = 10

def _get_relevant_documents(

self,

query: str,

*,

run_manager: CallbackManagerForRetrieverRun

) -> List[Document]:

query_embedding = self.embed_fn(query)

results = hybrid_search_with_embedding(self.conn, query_embedding, query, self.top_k)

return [Document(page_content=r["content"], metadata={"id": r["id"]}) for r in results]Plug this into any LangChain chain that accepts a retriever, including create_retrieval_chain and RetrievalQA.

Benchmarking Vector vs Text vs Hybrid

For any non-trivial search application, measure all three approaches on your actual query set before committing to a configuration.

A basic evaluation setup:

- Collect 100 to 200 real queries from users or representative test cases

- For each query, manually label the top 5 relevant documents (or use a gold-standard dataset if you have one)

- Run all three retrieval methods and compute NDCG@10 or precision@5

You will almost always find:

- Vector search wins on paraphrase and semantic queries

- Full-text search wins on exact term and code queries

- Hybrid search wins overall, even when it is not the best on either category individually

This is the key insight behind hybrid search: combining two imperfect rankers often beats either one alone, because their errors are uncorrelated.

Performance Considerations

A hybrid query is more expensive than a single vector or text query, but not dramatically so. On a table with 1 million rows and an HNSW index, a single vector search takes roughly 5 to 15ms. A hybrid query that runs both sides adds another 5 to 10ms for the GIN text search and a small amount for the join. Total latency is typically 15 to 30ms for well-indexed tables.

The dominant cost in production is usually the embedding call to your embedding model (50 to 150ms for OpenAI), not the Postgres query itself. Batch embedding requests where possible and cache embeddings for repeated queries.

If query latency is still too high:

- Reduce the candidate limit from 20 to 10

- Use an HNSW index with lower

ef_construction(faster build and query, slightly lower recall) - Materialize

ts_rankvalues for common queries into a covering index

Running on Managed PostgreSQL

All of the above works on any PostgreSQL 15 or later instance with the vector extension. On Rivestack, both vector and the GIN index machinery are available out of the box.

The only operational consideration is index maintenance. HNSW indexes do not rebalance automatically. On write-heavy workloads where document content changes frequently, monitor index bloat with:

SELECT pg_size_pretty(pg_relation_size('documents_embedding_idx'));If the index grows significantly larger than the actual data, run REINDEX CONCURRENTLY to rebuild it without taking a lock:

REINDEX INDEX CONCURRENTLY documents_embedding_idx;Summary

Hybrid search in PostgreSQL combines the semantic range of vector embeddings with the precision of full-text search. The implementation is a SQL query, not an additional service. The key points:

- Use Reciprocal Rank Fusion to combine rankings without normalizing scores

- Generate

tsvectorcolumns as stored generated columns so indexing stays automatic - Apply metadata filters in both subqueries, not just one

- Start with 20 candidates per side and tune based on recall measurements

- Expect total query latency of 15 to 30ms on indexed tables with up to a few million rows

The result is a retrieval system that handles both "what does connection pooling do" and "pgbouncer max_client_conn setting" correctly, which pure vector search alone cannot do reliably.

If you are building on Rivestack, managed pgvector is enabled by default. Both the vector extension and the GIN index support needed for hybrid search are available without any configuration.